How Do AI Hearing Aids Work?

For the past three decades, hearing aids have been stuck in an era of incrementalism. Each year, manufacturers released new models that offered longer battery life, better Bluetooth connectivity, and other nice-to-have features; however, the biggest challenge for wearers remained untouched: hearing a friend’s voice in a crowded room.

Now, this is changing. Rapid advances in artificial intelligence (AI) make it possible to solve this long-standing challenge. AI hearing aids finally let wearers stay connected to conversations, even in noisy places.

Why hearing speech in noise is so difficult for people with hearing loss

To understand the AI breakthrough, first consider the problem that no earlier hearing aid could solve: understanding speech in noise.

Understanding speech clearly in a noisy place requires a healthy connection between the ear and the brain. When we are young and our hearing is healthy, discerning speech from noise feels easy. The brain and ears possess an uncanny ability to work in tandem to filter out the "unimportant" sounds. But as our ears age, they stop providing the brain with clear, real-time signals. Simultaneously, the aging brain becomes less adept at processing the degraded information it does receive.

In most cases, the high-frequency hair cells in our ears are the first to go. These carry the signals for sharp consonants like “s,” “th,” “f,” and “t,” which are crucial for speech clarity. Without them, words become blurry. This degradation forces the brain into a state of constant overdrive that leaves people with hearing loss feeling exhausted after a social event.

Why conventional hearing aids fail in noise

For thirty years, the hearing aid industry tried to fix this blurry signal by using conventional digital signal processing (DSP). This architecture relies on the physics of sound—frequency, amplitude, and timing—to decide what to amplify.

As technology progressed, engineers developed denoising algorithms that looked for statistical patterns. Human speech is rhythmic and has natural pauses, so the algorithms were programmed to prioritize "speech-like" sounds over steady, mechanical noises like the hum of an air conditioner.

However, this approach hits a ceiling the moment you step into a social setting. The fundamental issue is that conventional algorithms can measure the physics of a sound, but they cannot determine what the sound actually is. To a traditional processor, any sound that is not a steady background hum looks like speech.

Because these processors rely on the physical characteristics of a sound wave, they cannot tell the difference between a voice and a clattering plate. Both sounds pulse and change in similar ways, so the hearing aid treats them both as important. It amplifies the clinking silverware and the laughter from the next table right along with the person you are trying to hear, creating a chaotic wall of sound.
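To make this limitation concrete, here is a minimal Python sketch of a modulation-based detector of the kind described above. It is an illustration under stated assumptions, not any manufacturer's algorithm: the function names, the 20 ms frame length, the 0.3 threshold, and the three synthetic test signals are all invented for this example. It measures how much a sound's loudness fluctuates from frame to frame and labels anything that pulses as "speech-like."

```python
import math

def frame_levels(samples, frame_len):
    """RMS level of each non-overlapping frame."""
    levels = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        levels.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return levels

def modulation_depth(samples, frame_len=160):
    """Std/mean of frame levels: near 0 for steady sounds, larger for pulsing ones."""
    levels = frame_levels(samples, frame_len)
    mean = sum(levels) / len(levels)
    if mean == 0:
        return 0.0
    variance = sum((lv - mean) ** 2 for lv in levels) / len(levels)
    return math.sqrt(variance) / mean

def looks_like_speech(samples, threshold=0.3):
    """Hypothetical rule: anything whose loudness pulses counts as speech."""
    return modulation_depth(samples) > threshold

RATE = 8000        # samples per second (assumed for this example)
N = RATE           # one second of audio

# Steady 100 Hz hum with constant amplitude (like an air conditioner).
hum = [math.sin(2 * math.pi * 100 * n / RATE) for n in range(N)]

# "Voice": a 200 Hz tone whose loudness pulses about 4 times per second.
voice = [(0.5 + 0.5 * math.sin(2 * math.pi * 4 * n / RATE))
         * math.sin(2 * math.pi * 200 * n / RATE) for n in range(N)]

# "Clatter": short 3 kHz bursts, 20 ms long, five times per second.
clatter = [math.sin(2 * math.pi * 3000 * n / RATE) if n % 1600 < 160 else 0.0
           for n in range(N)]

for name, signal in (("hum", hum), ("voice", voice), ("clatter", clatter)):
    print(name, looks_like_speech(signal))
```

The steady hum is rejected, but the pulsing "voice" and the bursty "clatter" both pass, because the detector only sees that loudness changes over time, not what is making the sound.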

AI hearing aids finally help wearers understand speech in noisy places

Using AI to process sound changes what is possible. Conventional sound processing relies on fixed rules to decide what to amplify, but AI can decide what to amplify based on what a sound actually is.

Advanced AI models trained on millions of examples of speech and noise recognize the subtle patterns of human voices, allowing them to identify speech even in the most difficult environments. For example, in a crowded room where multiple voices overlap, the AI can pick out a target voice and suppress the others.

This approach mimics how the brain of a healthy-hearing person naturally works. A healthy auditory system can more easily isolate a speaker’s voice even in a crowded room. When hearing is impaired, the brain receives a blurry, incomplete signal and has to work much harder to fill in the missing frequencies.

By identifying the speech first, the AI can boost the specific sounds the listener is missing without amplifying the distracting noise. This delivers a clear, high-contrast signal to the brain, allowing the wearer to follow a conversation with significantly less effort and mental strain.

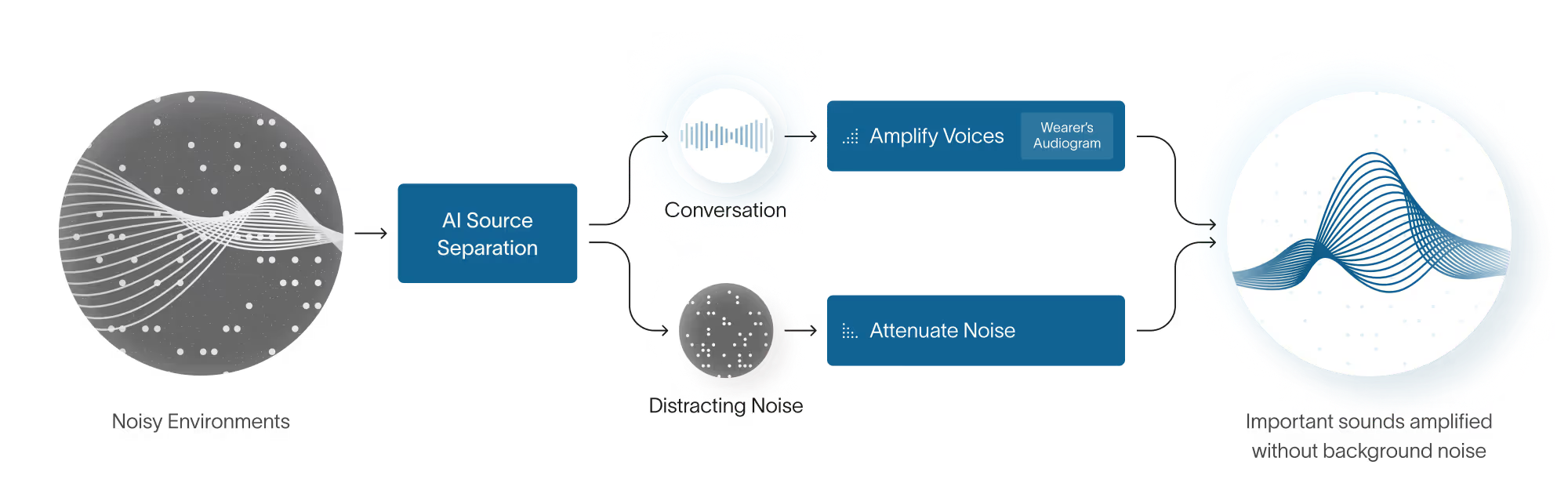

This is how Fortell AI Hearing Aids work. To solve the challenge of understanding speech in noise, Fortell moved away from the conventional model to build an entirely new, AI-native architecture. In this system, the AI analyzes incoming sounds and uses its training to decide which sounds are the speech you want to hear and which are distracting background noise.

Once the AI has identified the target voice and the background noise, it splits them into two tracks and attenuates the noise track. The tracks are then recombined, and prescriptive amplification is applied to match the wearer’s specific hearing needs. This process allows the system to boost speech without also boosting the distracting background noise, so the wearer can hear speech clearly.
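The steps above can be sketched in a few lines of Python. This is a simplified illustration, not Fortell's implementation: it assumes the speech and noise tracks have already been separated by a model (not shown), and it stands in for prescriptive amplification with a single broadband gain, whereas a real prescription varies gain by frequency. The function names and the default decibel values are hypothetical.

```python
def db_to_gain(db):
    """Convert a level change in decibels to a linear amplitude factor."""
    return 10 ** (db / 20)

def remix(speech, noise, noise_attenuation_db=20.0, prescription_gain_db=10.0):
    """Attenuate the noise track, recombine it with speech, then amplify.

    speech, noise: equal-length lists of samples produced by a separation
    model (hypothetical; the model itself is not shown here).
    """
    if len(speech) != len(noise):
        raise ValueError("speech and noise tracks must be the same length")
    noise_gain = db_to_gain(-noise_attenuation_db)   # e.g. -20 dB -> 0.1
    output_gain = db_to_gain(prescription_gain_db)   # simplified: one broadband gain
    return [output_gain * (s + noise_gain * n) for s, n in zip(speech, noise)]

# Toy example: one sample each of speech and noise at equal amplitude,
# with no amplification so the effect of the attenuation is easy to see.
mixed = remix([1.0], [1.0], noise_attenuation_db=20.0, prescription_gain_db=0.0)
print(mixed)
```

In this sketch the speech-to-noise ratio of the output improves by exactly the attenuation applied to the noise track, whatever gain is applied afterward.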

Not all AI is created equal

“AI” has become a marketing buzzword in the hearing aid industry, but it doesn’t always mean that AI is applied in the way described above.

Many hearing aid brands that market themselves as AI hearing aids use AI in a peripheral way. They still use the conventional DSP architecture with AI simply layered on top. Usually, these hearing aids use AI for scene classification, using tiny neural networks to tweak the parameters of legacy signal processing pipelines.

In these instances, the AI isn’t being used to separate speech from noise; rather, it’s being used to automate the mode switches in a traditional signal processing system.
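The difference is easiest to see in a sketch. The Python below is a hypothetical illustration of the scene-classification pattern, not any vendor's code: the scene labels, parameter names, and values are invented, and a trivial rule stands in for the small neural network. The point is that the classifier only picks settings; the audio itself still flows through the same conventional processing.

```python
# Hypothetical parameter presets for a conventional DSP pipeline.
PRESETS = {
    "quiet":           {"noise_reduction_db": 0,  "directional_mic": False},
    "speech_in_noise": {"noise_reduction_db": 9,  "directional_mic": True},
    "steady_noise":    {"noise_reduction_db": 12, "directional_mic": False},
}

def classify_scene(level_db, modulation_depth):
    """Stand-in for a small neural network: maps coarse features to a label."""
    if level_db < 50:
        return "quiet"
    if modulation_depth > 0.3:
        return "speech_in_noise"
    return "steady_noise"

def select_preset(level_db, modulation_depth):
    """Scene classification only chooses parameters; it never touches the audio."""
    return PRESETS[classify_scene(level_db, modulation_depth)]

# A loud, strongly fluctuating environment selects the speech-in-noise preset.
settings = select_preset(level_db=72, modulation_depth=0.6)
print(settings)
```

Nothing in this flow separates the talker from the noise. It only switches the legacy pipeline between modes.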

The evidence: Fortell’s AI Hearing Aids vs other AI hearing aids

Fortell’s AI was put to the test against the leading AI hearing aid in a double-blind, randomized controlled trial overseen by researchers at NYU Langone.

The study focused on speech intelligibility, the ability to understand words clearly in loud environments. A target talker was positioned directly in front of the participant, while competing "distracting talkers" played from loudspeakers positioned behind them.

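Researchers tested participants at three increasingly difficult signal-to-noise ratio (SNR) levels. SNR compares the level of a desired signal (here, the target speech) with the level of background noise, expressed in decibels (dB): positive values mean the speech is louder than the noise, 0 dB means the two are equally loud, and negative values mean the noise is louder.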

- Moderate noise (+6 dB SNR): Equivalent to a quiet café.

- Challenging noise (0 dB SNR): Comparable to a busy restaurant or family dinner.

- Extremely challenging noise (-6 dB SNR): Similar to a crowded bar, where the background noise is significantly louder than the person speaking.
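For readers who want the arithmetic behind these figures, SNR in decibels is 20 times the base-10 logarithm of the ratio of speech amplitude to noise amplitude. The short Python sketch below (function and variable names are illustrative) shows what the three test levels mean as amplitude ratios.

```python
import math

def snr_db(speech_rms, noise_rms):
    """Signal-to-noise ratio in decibels from RMS amplitudes."""
    return 20 * math.log10(speech_rms / noise_rms)

def amplitude_ratio(snr):
    """Speech-to-noise amplitude ratio implied by an SNR in decibels."""
    return 10 ** (snr / 20)

for level in (6, 0, -6):
    ratio = amplitude_ratio(level)
    print(f"{level} dB SNR: speech amplitude is {ratio:.2f}x the noise")
```

At +6 dB the speech amplitude is about twice that of the noise, at 0 dB the two are equal, and at -6 dB the speech is only about half as strong as the noise around it.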

The results were staggering, particularly in the most difficult environments where conventional hearing aids often fail:

- 19x higher odds of understanding words correctly with Fortell vs. the leading AI hearing aid in the most challenging acoustic environments

- 98% of background noise eliminated with Fortell
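Odds are not the same as probability, so "19x higher odds" does not mean 19 times as many words understood. As a rough illustration only, the Python sketch below converts an odds ratio into a probability for a made-up baseline. The 20% baseline is a hypothetical number chosen for the arithmetic and is not a figure from the study.

```python
def apply_odds_ratio(baseline_probability, odds_ratio):
    """Probability obtained by multiplying the baseline odds by odds_ratio."""
    baseline_odds = baseline_probability / (1 - baseline_probability)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1 + new_odds)

# Hypothetical baseline (NOT from the study): 20% of words understood.
improved = apply_odds_ratio(0.20, 19)
print(f"{improved:.0%}")
```

With that assumed baseline, odds of 0.25 become odds of 4.75, which corresponds to roughly 83% of words understood. A different baseline would give a different percentage for the same odds ratio.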

How to assess the AI in hearing aids: 5 questions for your audiologist

Not all AI is designed to solve the same problem. To determine which category a hearing aid falls into, ask these five targeted questions at your next hearing consultation.

1. Does the AI perform true speech and noise separation?

Ask if the device performs source separation. This is the ability to identify speech and noise as two distinct objects and treat them independently, allowing the system to turn down the noise path while cleanly amplifying the speech path.

2. Is the AI powerful enough to separate speech from noise in real time, without a delay?

A high-performance device should complete its calculations in milliseconds. If the AI only works on a "delay" or requires a smartphone to do the heavy lifting, it isn't true on-device source separation.

3. Can the AI run continuously for a full day on a single charge?

True real-time AI is power-hungry. In many conventional hearing aids, the high-level AI features drain the battery so quickly (often in 4–8 hours) that the hearing aid automatically turns the AI off to save power.

4. Is the AI validated by a blinded randomized controlled clinical trial?

Many brands use internal "satisfaction surveys." These are not clinical evidence. Ask for a blinded randomized controlled trial. Specifically, look for data on "word recognition in noise." If they can’t show you a study where the device was tested against its top competitors, the claim is likely marketing, not science.

5. Can I hear a real-world demo (not a simulation)?

Many demos are pre-recorded studio clips designed to sound perfect. Ask for a live demo in a truly noisy environment.

Meeting the promise of AI

For decades, the standard response to hearing loss was simply to turn up the volume. But as millions of wearers have discovered, louder noise is not the same as clearer speech. By replacing the traditional rule-based processor with an AI-native engine, Fortell AI Hearing Aids finally solve the problem of understanding speech in noise.