Why Can't I Hear in Restaurants? The Science Behind the Struggle

Your daughter made the reservation months ago. It’s one of those celebrated New York spots, a beautiful restaurant with soaring ceilings and industrial glass. You don’t have the heart to tell her that while the menu looks incredible, the environment will be too challenging for you—even with your hearing aids.

Twenty minutes into the meal, your "social nod" inevitably kicks in. It’s that gesture you use to feign engagement when you’ve actually lost the thread of a conversation. By the time the entrées arrive, your shoulders are tight from leaning forward, and your eyes are fixed on her lips, searching for visual cues to fill the gaps. You already feel exhausted.

How your brain processes speech in noise

Most people think of hearing as a mechanical process, like a microphone picking up sound. But hearing actually happens in two places: the ears and the brain.

- The ears capture vibrations in tiny hair cells and convert them into electrical signals.

- The brain takes those signals and decodes them into meaning.

With sensorineural hearing loss, the most common form of age-related hearing loss, the hair cells in the ear are damaged over time. This limits the signals that reach the brain. In a chaotic restaurant with overlapping sounds, these signals become increasingly important for understanding speech.

The Cocktail Party Problem

In 1953, communications engineer E. Colin Cherry published a paper that described what he later called the “Cocktail Party Problem”: the challenge of following one conversation when many are happening simultaneously. He was trying to understand how people with normal hearing manage to do it at all.

The answer is a combination of acoustic cues: the spatial location of the speaker, the specific timbre of their voice, the rhythm of their speech, and top-down cognitive processing, where the brain uses context, expectation, and attention to fill gaps in the incoming signal. Healthy hearing gives the brain enough acoustic information to make this work, but it’s still effortful in a noisy environment.

With hearing loss, the acoustic cues the brain relies on to make sense of sound are degraded, and the task becomes much harder or impossible. The Cocktail Party Problem is not solved by just making things louder; it is solved by improving the quality of the signal the brain receives, specifically, the ratio of target speech to competing noise.

The golden measurement for hearing aids: Signal-to-noise ratio (SNR)

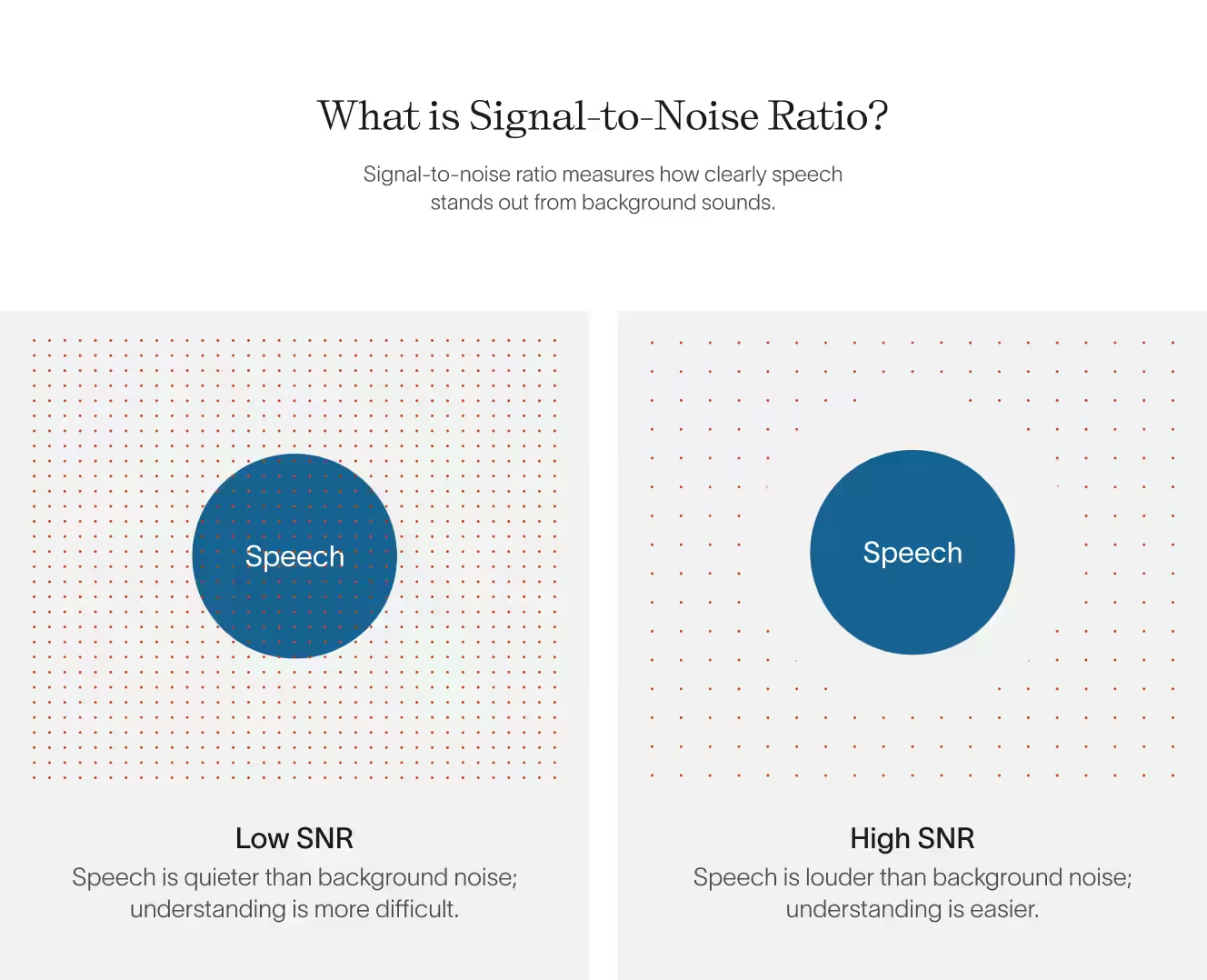

That ratio is called the signal-to-noise ratio (SNR). It is an important metric in determining whether you will enjoy your dinner or struggle to hear the signal (your daughter’s voice) above the noise (other talkers, clanking dishes, and so on).

SNR measures how clearly speech stands out from background sounds. With a low SNR, speech is quieter than background noise, and the person speaking is difficult to hear. With a high SNR, speech is louder than background noise, and the person speaking is easier to hear.
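For readers who like to see the arithmetic, here is a minimal sketch of how SNR is commonly expressed in decibels: 20 times the base-10 logarithm of the speech level divided by the noise level. The function names and the toy sample values are assumptions made for illustration; they are not measurements from any hearing aid.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of audio samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def snr_db(speech, noise):
    """SNR in decibels: 20 * log10(speech RMS / noise RMS)."""
    return 20 * math.log10(rms(speech) / rms(noise))

# Toy square-wave "signals"; the values are illustrative only.
noise = [0.2, -0.2, 0.2, -0.2]
quiet_talker = [0.1, -0.1, 0.1, -0.1]  # half the noise level
loud_talker = [0.4, -0.4, 0.4, -0.4]   # twice the noise level

low_snr = snr_db(quiet_talker, noise)   # negative: speech sits below the noise
high_snr = snr_db(loud_talker, noise)   # positive: speech sits above the noise
```

In this sketch, a talker at half the noise level gives an SNR of about -6 dB, and a talker at twice the noise level gives about +6 dB. An SNR of 0 dB means speech and noise are equally loud.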

SNR is effectively the golden measurement for the “Cocktail Party Problem.” If a technology cannot significantly improve the SNR, it cannot solve the problem.

Why conventional hearing aids struggle with background noise

At their most basic level, conventional hearing aids take an incoming signal and make it louder. This works in a quiet room, but when you step into social settings like a bar, restaurant, or crowded cafe, the technology doesn’t hold up.

If you are in a loud restaurant, the "signal" (your spouse) and the "noise" (nearby restaurant goers) are both hitting the microphone at the same time. When the hearing aid turns up the volume to help you hear your spouse, it also turns up the other voices and distracting sounds right along with it. It makes things louder, but not intelligible.
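A small sketch makes the point concrete: applying the same gain to speech and noise leaves the ratio between them unchanged. The sample values and the gain are hypothetical. A real hearing aid only ever sees the mixture; the two components are kept separate here purely so the ratio can be measured.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of audio samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def snr_db(speech, noise):
    """Signal-to-noise ratio in decibels."""
    return 20 * math.log10(rms(speech) / rms(noise))

def amplify(samples, gain):
    """Plain amplification: every sample is multiplied by the same gain."""
    return [gain * x for x in samples]

speech = [0.1, -0.1, 0.1, -0.1]  # your dinner companion (illustrative values)
noise = [0.2, -0.2, 0.2, -0.2]   # the rest of the room (illustrative values)
gain = 4.0                       # hypothetical volume boost, roughly 12 dB

snr_before = snr_db(speech, noise)
snr_after = snr_db(amplify(speech, gain), amplify(noise, gain))
# Both values are about -6 dB: everything is louder, nothing is clearer.
```

Because the gain multiplies both components equally, it cancels out of the ratio. Volume alone cannot change SNR.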

Denoising algorithms guess at speech vs noise

Engineers attempted to solve this challenge by creating “denoising algorithms.” These algorithms look at the properties of sound to guess what might be “speech” and what might be “noise” based on these properties. For example, speech often modulates. It starts and stops and changes in pitch. Meanwhile, background sounds like the hum of the AC are constant and unchanging. The algorithm can look at these two sounds and decide the AC must be background noise and turn it down.

In a quiet living room, this technology works fine. But in a restaurant, the noise (the laughter, the music, the forty other people talking) also pulses and modulates. To a conventional hearing aid, these sounds appear to be speech too, so the hearing aid boosts them all, creating a chaotic wall of sound.
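The modulation heuristic described above can be sketched in a few lines. This is a deliberately simplified illustration, not the algorithm used in any particular hearing aid: the frame size, the threshold, and the function names are all assumptions. It measures how much the loudness of a sound varies from moment to moment and labels anything that fluctuates enough as "speech".

```python
import math

def frame_levels(samples, frame_size):
    """RMS level of each consecutive, non-overlapping frame."""
    levels = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        levels.append(math.sqrt(sum(x * x for x in frame) / frame_size))
    return levels

def modulation_depth(samples, frame_size=100):
    """How much the level fluctuates, relative to its average."""
    levels = frame_levels(samples, frame_size)
    mean = sum(levels) / len(levels)
    if mean == 0:
        return 0.0
    variance = sum((lv - mean) ** 2 for lv in levels) / len(levels)
    return math.sqrt(variance) / mean

def looks_like_speech(samples, threshold=0.3):
    """Hypothetical rule: strongly fluctuating sound is treated as speech."""
    return modulation_depth(samples) > threshold

# A steady hum: constant-amplitude tone, like an air conditioner.
hum = [math.sin(2 * math.pi * k / 20) for k in range(1000)]

# Bursty sound: loud and soft stretches alternate, like a voice,
# but also like laughter or chatter from the next table.
bursty = [(1.0 if (k // 100) % 2 == 0 else 0.1) * math.sin(2 * math.pi * k / 20)
          for k in range(1000)]

hum_is_speech = looks_like_speech(hum)        # False: steady, so turned down
bursty_is_speech = looks_like_speech(bursty)  # True: fluctuates, so boosted
```

The rule handles the hum correctly, but it has no way to tell a wanted voice from unwanted chatter. Both fluctuate, so both pass the test.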

Restaurants are an acoustic minefield

If conventional hearing aids struggle in general noise, they completely break down in a loud restaurant. Multiple forces converge to shatter the already fragile ear-brain connection.

- Overlapping sounds: As explained above, conventional processors with denoising algorithms rely on the physical characteristics of sound, so they cannot tell the difference between a voice you want to hear and the clinking silverware around you. Both pulse and change in similar ways, so the hearing aid treats them both as important. It amplifies the clinking silverware and the laughter from the table next to you right along with the person you are trying to hear.

- Reverberation: Restaurants are often designed with hard surfaces like glass, tile, and wood that reflect sound rather than absorb it. Your spouse’s voice doesn’t just hit the microphone once; it hits it dozens of times as it bounces off the walls.

- The Lombard Effect: This is a psychological phenomenon where people involuntarily raise their voices to be heard over background noise. As the restaurant gets louder, everyone speaks louder, making background chatter even more unbearable.

The solution: improving SNR with AI

Because conventional hearing aids process sound based solely on its physical properties, they are unable to solve the Cocktail Party Problem. To a traditional device, your friend’s voice and the background chatter similarly pulse and modulate, making them impossible to separate based on their physical properties alone.

True innovation requires a device that doesn’t just manage sound for the ear, but organizes it for the brain.

AI-driven source separation

Fortell represents a new category of hearing technology. Instead of relying solely on the physics of a sound wave, our technology uses advanced AI models trained on millions of real-world audio examples. We have taught the machine to understand not just what sounds are but where they are coming from.

By interpreting sound through this lens, Fortell can separate speech from noise and selectively amplify only the speech that matters. This is made possible by two foundational breakthroughs:

1. Semantic intelligence and Spatial AI

Using deep neural networks, Fortell recognizes sounds in context. It combines semantic understanding—categorizing speech, noise, and environmental cues in real time—with Spatial AI. By analyzing input from multiple microphones, the system recognizes exactly where sounds are coming from, boosting the person in front of you while reducing interfering voices from other directions.
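To give a feel for why multiple microphones carry directional information at all, here is a sketch of a classical technique called delay-and-sum beamforming. This is not Fortell’s method, which the article describes as neural-network based; it is a much older, simpler idea shown only for intuition. The sketch assumes that sound from the talker in front reaches both microphones at the same instant, while sound from the side reaches the second microphone slightly later. The delay and tone frequencies are hypothetical and chosen so that the side sound cancels completely; real interfering sounds would cancel only partially.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of audio samples."""
    return math.sqrt(sum(x * x for x in samples) / len(samples))

def delayed(samples, n):
    """Shift a signal later in time by n samples, padding with silence."""
    return [0.0] * n + samples[:len(samples) - n]

N = 400
DELAY = 4  # hypothetical extra travel time (in samples) to the second microphone

front = [math.sin(2 * math.pi * k / 50) for k in range(N)]  # talker straight ahead
side = [math.sin(2 * math.pi * k / 8) for k in range(N)]    # interferer off to one side

# Each microphone hears both sources; the side source arrives late at mic 2.
mic1 = [f + s for f, s in zip(front, side)]
mic2 = [f + s for f, s in zip(front, delayed(side, DELAY))]

# Averaging the microphones reinforces what arrives in step (the front talker)
# and weakens what arrives out of step (the side interferer).
output = [(a + b) / 2 for a, b in zip(mic1, mic2)]

# Leftover interference, measured after the first DELAY samples have passed.
residual_single = rms([m - f for m, f in zip(mic1, front)][DELAY:])
residual_summed = rms([o - f for o, f in zip(output, front)][DELAY:])
```

In this idealized case, the single microphone carries the full interferer while the averaged output carries essentially none of it, and the front talker passes through unchanged.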

2. A proprietary chip

Running deep neural networks typically requires the power of a smartphone or a server. To bring this intelligence to a hearing aid, Fortell designed a proprietary AI chip purpose-built for sound processing. This chip delivers vastly more compute than any prior hearing aid, allowing for sophisticated processing while maintaining all-day battery life.

SNR improves by 17 dB with Fortell AI Hearing Aids

In a clinical trial measuring speech understanding in noise, Fortell delivered a substantial improvement in signal-to-noise ratio (SNR), with gains of approximately 17 dB. In the demo below, you can hear how a talker sounds in a noisy restaurant environment with a conventional hearing aid, compared to the same scene with Fortell’s AI turned on.

Back to the conversation

The true test of any hearing technology isn’t a quiet audiology clinic; it’s a Saturday night at a crowded restaurant. By significantly improving SNR in challenging noise environments, Fortell changes the restaurant experience for hearing aid wearers. The clatter of silverware and din of nearby talkers are relegated to the background, where they belong. The restaurant is no longer a minefield; it’s just a place to have dinner with your daughter again.